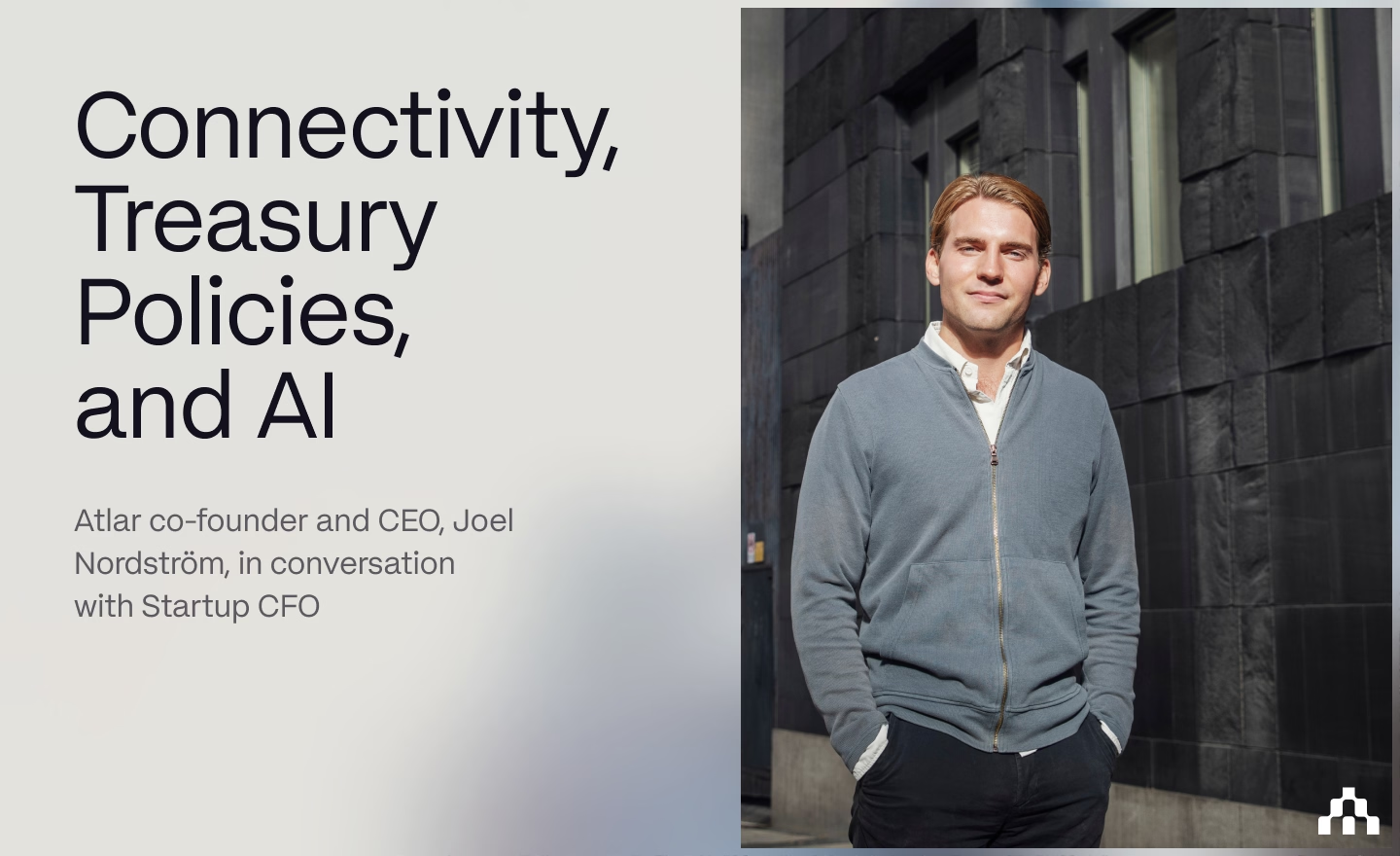

AI in Finance Needs Accountability

In our last post, we covered why many AI tools in finance have underwhelmed so far, and why reliable data is the starting point. There's a second challenge, though, and it's more fundamental: AI is probabilistic, while finance work is deterministic.

Modern AI models don't follow rigid, predefined rules. They often produce different outputs given similar inputs, and can't fully explain how they got there. This sits in obvious tension with finance work, where every output needs to be auditable, reproducible, and traceable.

Who signs off?

Finance teams work under strict regulatory and accounting frameworks such as SOX, IFRS, and GAAP, alongside their own internal controls. These may appear abstract, but in practice they boil down to personal accountability: the CFO signs off on the annual report; treasurers are personally responsible for liquidity; controllers own accuracy. Before relying on AI for material decisions, a finance professional has to ask: can I stake my career on this?

Reliable data makes the output more accurate, which helps, but it only gets you part of the way. You also need to know that the AI is working within your company's rules, and you need a clear record of how it arrived at its recommendation. Without that, the output won't pass an audit, and no one will be willing to put their name to it.

The treasury policy

No one is suggesting finance teams should avoid AI altogether; the controls just need to catch up.

Most teams follow some sort of corporate treasury policy, whether that's a formal document or a set of vaguely agreed-upon assumptions. These policies can be pretty specific: minimum liquidity buffers, counterparty exposure caps, payment approval thresholds. They also tend to live in a PDF somewhere, enforced manually and only verified after the fact.

Now, imagine these policies were machine-readable. AI agents could reference them when recommending actions, and the reviewer could validate the agent's decision-making against the same policy. When something falls outside policy, the agent escalates to the reviewer rather than proceeding. The treasury policy becomes a set of rules that AI is held accountable to, not just a document that humans are expected to remember.
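To make the idea concrete, here is a minimal sketch in Python of what a machine-readable policy check might look like. The names (`TreasuryPolicy`, `review_payment`) and the two limits are hypothetical, chosen for illustration rather than taken from any real product. A real policy would carry many more clauses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TreasuryPolicy:
    # Hypothetical limits, for illustration only.
    min_liquidity_buffer: float        # cash that must remain after a payment
    payment_approval_threshold: float  # payments above this need a human


@dataclass(frozen=True)
class Decision:
    outcome: str             # "proceed" or "escalate"
    clause: Optional[str]    # policy clause that triggered the escalation


def review_payment(policy: TreasuryPolicy, amount: float,
                   cash_balance: float) -> Decision:
    """Check a proposed payment against the policy before acting on it."""
    if cash_balance - amount < policy.min_liquidity_buffer:
        return Decision("escalate", "min_liquidity_buffer")
    if amount > policy.payment_approval_threshold:
        return Decision("escalate", "payment_approval_threshold")
    return Decision("proceed", None)


policy = TreasuryPolicy(min_liquidity_buffer=1_000_000,
                        payment_approval_threshold=250_000)
print(review_payment(policy, amount=300_000, cash_balance=2_000_000))
```

Because the decision names the clause that triggered it, the reviewer can check the agent's reasoning against the same policy the agent used.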

All of this depends on the AI having the right context. A treasury policy that caps counterparty exposure at 15% is only enforceable if the AI can see your full position across every bank and entity. Which is why AI in finance has to start with data and connectivity.
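A small Python sketch shows why the full position matters. It assumes balances arrive as `(entity, bank, amount)` tuples in a single currency. That shape and the function names are assumptions made for this example.

```python
from collections import defaultdict


def counterparty_shares(balances):
    """Return each bank's share of total cash across all entities.

    `balances` is an iterable of (entity, bank, amount) tuples,
    assumed to be in one currency already.
    """
    per_bank = defaultdict(float)
    for _entity, bank, amount in balances:
        per_bank[bank] += amount
    total = sum(per_bank.values())
    if total == 0:
        return {}
    return {bank: amount / total for bank, amount in per_bank.items()}


def cap_breaches(balances, cap=0.15):
    """List the banks whose share of total cash exceeds the cap."""
    shares = counterparty_shares(balances)
    return sorted(bank for bank, share in shares.items() if share > cap)


# Entity A holds 100 at each of nine banks; Entity B holds 100 at Bank1.
entity_a = [("A", f"Bank{i}", 100.0) for i in range(1, 10)]
entity_b = [("B", "Bank1", 100.0)]

print(cap_breaches(entity_a))             # Entity A alone: no breach
print(cap_breaches(entity_a + entity_b))  # group view: Bank1 exceeds 15%
```

Looking at Entity A alone, every bank sits at about 11% of cash. Once Entity B's balance is included, Bank1 rises to 20% of the group total and breaches the cap.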

The audit chain

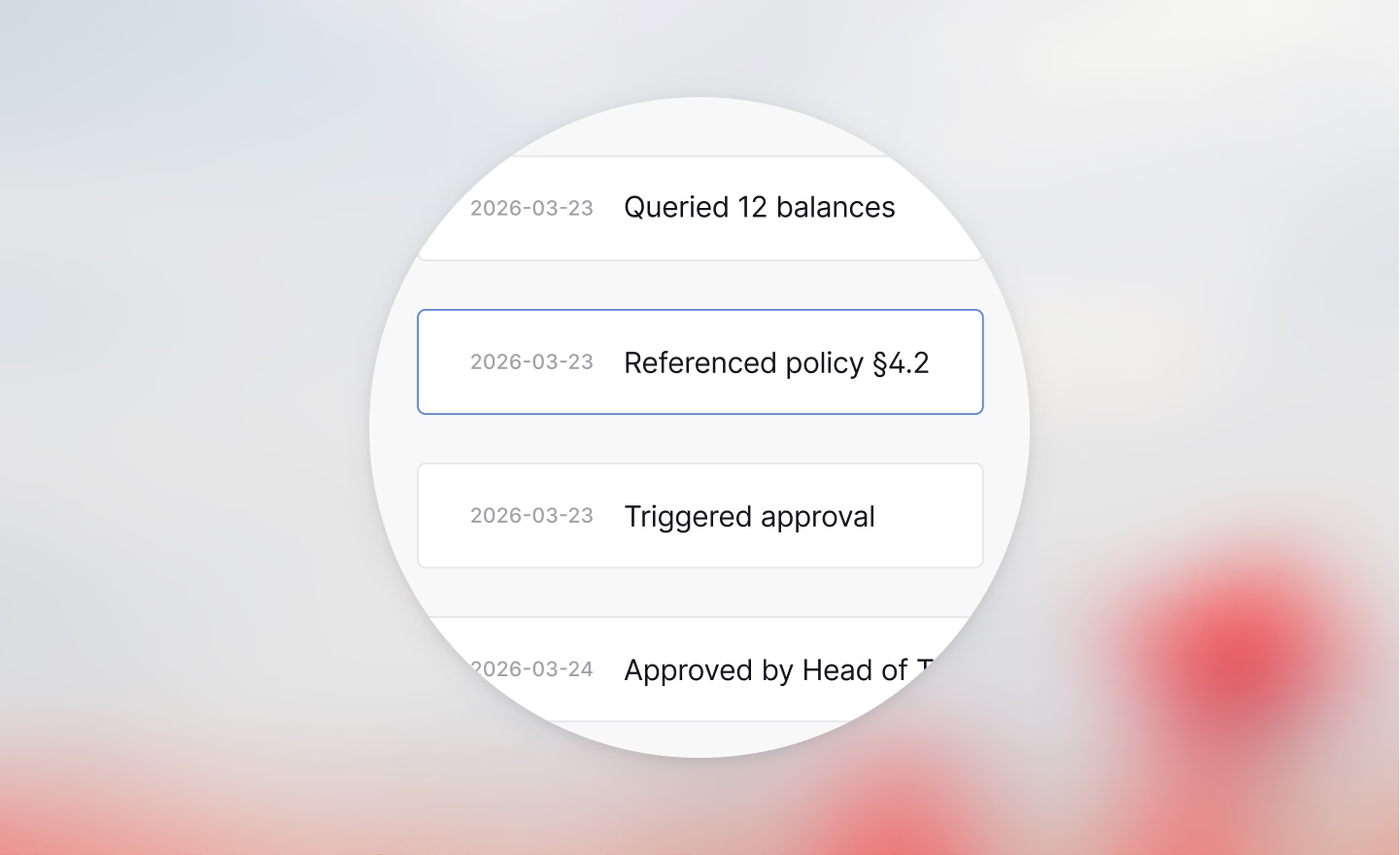

If the treasury policy governs what AI can recommend, the audit chain governs how those recommendations are tracked, reviewed, and approved.

Audit trails record the full sequence: what data was queried, which clause in the treasury policy the AI referred to, and who approved the result. Approval chains enforce how many people review it, and at what thresholds.
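As a rough illustration, an audit entry and an approval chain could be modelled along these lines in Python. The field names, the tier structure, and the clause label are all hypothetical. A production audit trail would also need tamper-evident storage, which this sketch leaves out.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    timestamp: datetime
    data_queried: tuple   # what data the agent read
    policy_clause: str    # which clause it relied on
    approved_by: tuple    # who signed off


def required_approvers(amount, tiers):
    """Number of approvers needed; tiers are (threshold, count) pairs."""
    needed = 0
    for threshold, approvers in sorted(tiers):
        if amount >= threshold:
            needed = approvers
    return needed


def record_decision(trail, data_queried, policy_clause,
                    approved_by, amount, tiers):
    """Append an entry only if enough distinct people have approved."""
    needed = required_approvers(amount, tiers)
    distinct = sorted(set(approved_by))
    if len(distinct) < needed:
        raise PermissionError(
            f"{needed} approver(s) required, got {len(distinct)}")
    entry = AuditEntry(datetime.now(timezone.utc), tuple(data_queried),
                       policy_clause, tuple(distinct))
    trail.append(entry)
    return entry


# Hypothetical tiers: one approver by default, two from 100k upwards.
tiers = [(0, 1), (100_000, 2)]
trail = []
record_decision(trail, ["balances:all-entities"], "clause 4.2",
                ["alice", "bob"], amount=500_000, tiers=tiers)
print(trail[0].policy_clause, trail[0].approved_by)
```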

Role-based access controls, applied equally to the AI, ensure it can't access data or suggest actions that the user themselves wouldn't be able to access or perform. The result is a chain of accountability you can query at any point, rather than having to reconstruct one when an auditor comes knocking. Reliable data is what makes AI in finance useful; the roles, approvals, policy enforcement, and audit trails around it are what make it trustworthy.
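One simple way to express the access rule is as a set intersection: the agent can only do what both it and the user it acts for are allowed to do. The scope names in this Python sketch are invented for illustration.

```python
def effective_permissions(agent_scopes, user_scopes):
    """The agent never gets more access than the user it acts for."""
    return frozenset(agent_scopes) & frozenset(user_scopes)


def can_perform(action, agent_scopes, user_scopes):
    return action in effective_permissions(agent_scopes, user_scopes)


# Hypothetical scopes for an agent and a junior analyst.
agent = {"read:balances", "read:payments", "suggest:payment"}
analyst = {"read:balances"}

print(can_perform("read:balances", agent, analyst))    # True
print(can_perform("suggest:payment", agent, analyst))  # False
```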

Autonomy requires accountability

Counter-intuitively, AI is pushing finance teams back towards first principles around transparency and accountability. Using it correctly forces teams to think carefully about their policies and documentation, because the AI operates within them.

AI can also flag where actual behaviour is diverging from stated policy, even spotting trends before a direct policy contradiction occurs. If your investment policy says maximum 90-day maturities but a growing share of recent placements are pushing close to that limit, you'd want to know before the next board meeting. Continuous policy monitoring like this is hard to do manually, but natural for AI with access to the underlying data.
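The maturity example can be sketched as a simple drift check in Python: compare the share of placements sitting close to the limit in a recent period against an earlier one. The 10-day margin and the 20-percentage-point alert threshold are arbitrary assumptions for illustration, not recommended values.

```python
def near_limit_share(maturities_days, limit=90, margin=10):
    """Share of placements within `margin` days of the limit."""
    if not maturities_days:
        return 0.0
    near = sum(1 for d in maturities_days if limit - margin <= d <= limit)
    return near / len(maturities_days)


def drift_report(earlier, recent, limit=90, margin=10, min_increase=0.2):
    """Flag outright violations and a rising share of near-limit placements."""
    violations = [d for d in recent if d > limit]
    increase = (near_limit_share(recent, limit, margin)
                - near_limit_share(earlier, limit, margin))
    return {
        "violations": violations,
        "near_limit_increase": increase,
        "alert": bool(violations) or increase >= min_increase,
    }


earlier = [30, 45, 60, 85]   # maturities in days, previous quarter
recent = [82, 88, 60, 89]    # maturities in days, this quarter
print(drift_report(earlier, recent))
```

Here no placement breaks the 90-day limit, yet the report still raises an alert because the near-limit share has climbed from a quarter to three quarters of placements.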

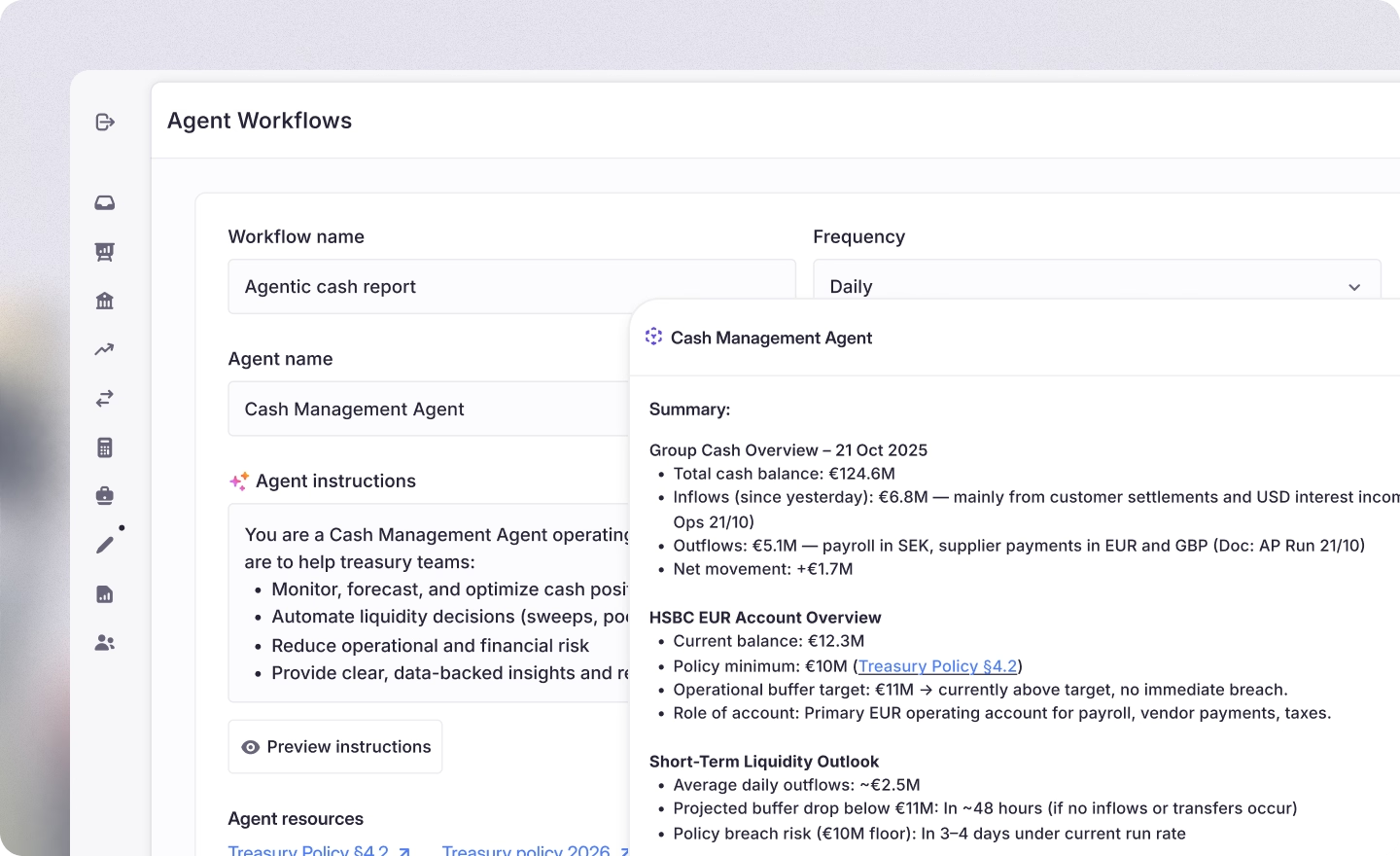

Getting the constraints right is what allows AI to move beyond isolated tasks and handle entire workflows. In practice, an agent working end to end would:

- Reference the treasury policies relevant to the task

- Access the relevant data, subject to its own data permissions and those of the user

- Log every data query and task execution in the audit trail

- Trigger approval chains at preset thresholds

- Flag any policy violations or risks as they arise

- Surface the result for a human to review

The reviewer can accept the recommendation or trace back through every step that led to it. Finance teams define the rules and stay in control, while the repetitive execution shifts to the AI. This frees teams to focus on the decisions that actually require human judgment, which is AI’s core promise.

See it in action

At Atlar, our agents handle cash positioning, payment reviews, and reconciliation, with forecasting coming soon. They operate within user-defined policies, log every execution, and surface results for human review.

If you're interested in seeing how our customers use Atlar's AI today, and what the future looks like, request a demo with our team.
